Ultimate Guide to A/B Testing for Google Ads

Testing is a fundamental part of marketing, because really, all advertising is part art and part science. You benefit from a combination of strategy, creativity, and ideally a solid foundation of data in order to create strong ads that convert.

That’s why A/B testing Google Ads is so crucial. And in this post, we’re going to go over exactly what A/B testing for Google Ads is, why it matters so much, what you should be split testing, and how to conduct tests that yield results.

What Is A/B Testing?

A/B testing, also called split testing, is the process of running two nearly identical campaigns to see how a small change in a single variable impacts your results.

And while you can split test nearly anything in a campaign, the most important rule of A/B testing is to change only one thing at a time. You can split test the copy, the audience you’re targeting, the placements, or the images, but you shouldn’t test them all at once. Otherwise, you won’t be able to tell which variable affected the results, and you may as well just be running two separate campaigns.
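One way to hold yourself to the single-variable rule is to write down both versions' settings and compare them before launch. The sketch below assumes the settings live in plain Python dictionaries; the field names (`headline`, `audience`, `daily_budget`) and both helper functions are hypothetical illustrations, not Google Ads API fields.

```python
# Hypothetical campaign settings; these keys are illustrative, not Google Ads API fields.
def changed_settings(control: dict, variant: dict) -> set[str]:
    """Return the names of settings whose values differ between the two versions."""
    keys = control.keys() | variant.keys()
    return {key for key in keys if control.get(key) != variant.get(key)}


def is_clean_ab_test(control: dict, variant: dict) -> bool:
    """A clean split test changes exactly one setting."""
    return len(changed_settings(control, variant)) == 1


control = {
    "headline": "Best chocolate around",
    "audience": "Chocolate lovers",
    "daily_budget": 6.00,
}
variant = dict(control, headline="Award-winning chocolate")  # one change
too_many = dict(variant, audience="Gift shoppers")           # two changes

print(changed_settings(control, variant))   # {'headline'}
print(is_clean_ab_test(control, variant))   # True
print(is_clean_ab_test(control, too_many))  # False
```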

Why Does A/B Testing Matter for Google Ads?

A/B testing is a vital part of Google Ads management and optimization. Every good agency and strategy should account for A/B tests, both in the total number of campaigns and in the ad budget.

A/B testing is the only way to determine how every aspect of your ad campaigns actually affects your results. Without it, you’re mostly making educated guesses, which may be accurate but could also wind up being dead wrong. Only with actual data can you properly optimize your campaigns, develop new winning strategies and campaigns, and maximize both ROI and ROAS.

At the end of the day, every business, industry, and audience is different, so there’s no way to know what truly works for your business without A/B testing. You don’t want to rely on general best practices and hope you’re implementing them correctly; you want to know that you’re actually getting it right.

What Should I A/B Test in Google Ads?

You should ultimately be A/B testing every single aspect of your Google Ads, even though this will take some time and effort. Let’s take a look at what you should test, and ideally how.

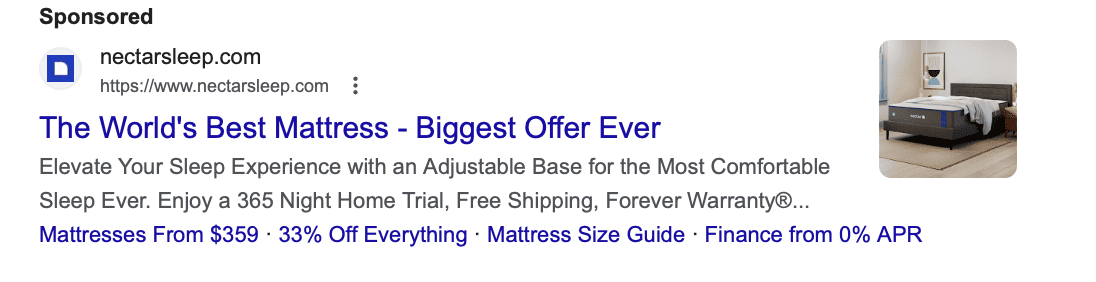

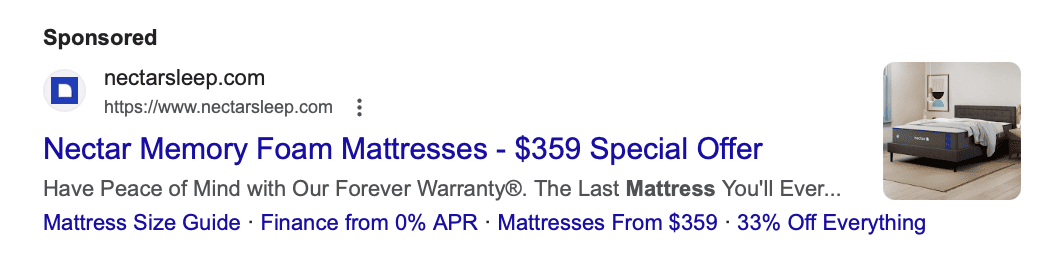

Your Copy

If you need help with copywriting, check out our Ultimate Guide to Google Ads Copy. Once you do that, it’s time to actually test the copy that you’ve got!

Create A/B tests that assess the following:

- The pain points you focus on in the copy

- The benefits you focus on

- The length or style of the copy

- Your CTA

You want to test both headlines and ad descriptions; you can also create different ad assets (previously “extensions”) to test.

Your Visuals

Not all Google Ads campaign types include images; Google Search Ads, for example, are text-based. Display Ads, Shopping Ads, and Performance Max campaigns, however, all include visuals.

While we already know that you should have multiple types of images for each campaign, including images of all dimensions, it can be helpful to run dedicated tests to learn more about what visuals users respond to based on the type of campaign and the stage of the funnel.

For example, if you sell framed photographs online, which of these do people respond to more:

- An image of someone framing a picture

- A still of a photo in the frame, or multiple framed photos with distinct frames

- A video of someone framing a picture

- An image of someone hanging a framed picture

- Images and videos with certain colors

- Images or videos with different amounts of overlay text

Your Audience Targeting

If you’re using audience targeting for your ad campaigns— which is particularly central with Display Ads, but can be used across multiple campaign types— you want to test it.

You should be testing:

- How audience layering impacts your ad campaigns

- Which audience targeting options are impacting your campaigns (including increasing or restricting reach and how that impacts lead/click quality/budget)

- How both positive and negative (aka restrictive) audience targeting impacts your campaigns

Your Product Descriptions

Running Shopping Ad campaigns? You’ll want to make sure that your product descriptions are well-optimized for Google.

Our Shopping Ads Guide Optimization Chapter covers how you can test and optimize your product listings in more detail.

Your Bid

Testing your bid (and even your bidding strategy) can be an important part of making sure that you’re truly maximizing your return on ad spend (ROAS).

You want your bid to be high enough that you’re dominating a solid chunk of the impression share and that your ad is ranking towards the top of ad results. You don’t, however, want to be bidding so much that you’re just tossing money away and unnecessarily increasing your customer acquisition costs (CAC).

So, test your bid, and your bidding strategy (in separate A/B tests, of course). See how it impacts your reach, your total number of clicks, and the quality of traffic being sent to your site.

Does Google Ads Have a Native Split Testing Feature?

Google does have a native split testing feature designed to make A/B testing more effective and straightforward for businesses. Some ad managers who were running campaigns before the feature existed may prefer to run manual tests, but the native testing feature saves time and comes with its own advantages.

Google’s split testing feature is available through the Experiments page in your Google Ads account. It’s important to note that it’s only available for Search, Display, Video, and Hotel Ads campaigns; it’s not currently available for App or Shopping campaigns.

Also important to note:

- You can schedule up to 5 experiments for a single campaign, but only one will run at a time

- It can take some time for the ads to complete the review process and run, often depending on the size of your original campaign, so schedule them in advance

- You get control over how much of your original campaign budget you want to allocate to each individual experiment while they’re running

- In Display campaigns, experiments use cookie splits to ensure that each user only ever sees either the experiment or your original campaign; in Search campaigns, you can choose between a search-based split and a cookie split
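Google doesn't publish exactly how it assigns users to each side of an experiment, so the following is only a sketch of the general idea behind a cookie split, assuming every user carries a stable cookie ID. The function name, the SHA-256 hashing, and the IDs are our own hypothetical illustration. The key property is determinism: the same cookie always lands in the same bucket, so a returning user keeps seeing the same version.

```python
import hashlib


def assign_arm(cookie_id: str, experiment_id: str, experiment_split: float = 0.5) -> str:
    """Hypothetical cookie split: place a cookie in either the experiment or the original.

    Hashing the same cookie ID with the same experiment ID always yields the same
    bucket, so a returning user keeps seeing the same version.
    """
    digest = hashlib.sha256(f"{experiment_id}:{cookie_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # roughly uniform value in [0, 1)
    return "experiment" if bucket < experiment_split else "original"


# The same user gets the same answer on every visit.
print(assign_arm("cookie-123", "headline-test"))
print(assign_arm("cookie-123", "headline-test"))

# Across many users, roughly half land in each arm with a 50% split.
arms = [assign_arm(f"cookie-{i}", "headline-test") for i in range(10_000)]
print(arms.count("experiment") / len(arms))
```

A search-based split, by contrast, would assign each individual search rather than each user, so the same person could see both versions.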

The Step-by-Step Process to A/B Testing on Google Ads

We’re going to walk you through how to A/B test Google Ads both with Google Experiments and with a more manual approach so you can run tests however you prefer. While we do recommend using the Google Experiments feature for all eligible campaigns, it’s ultimately up to you and any split testing is better than none.

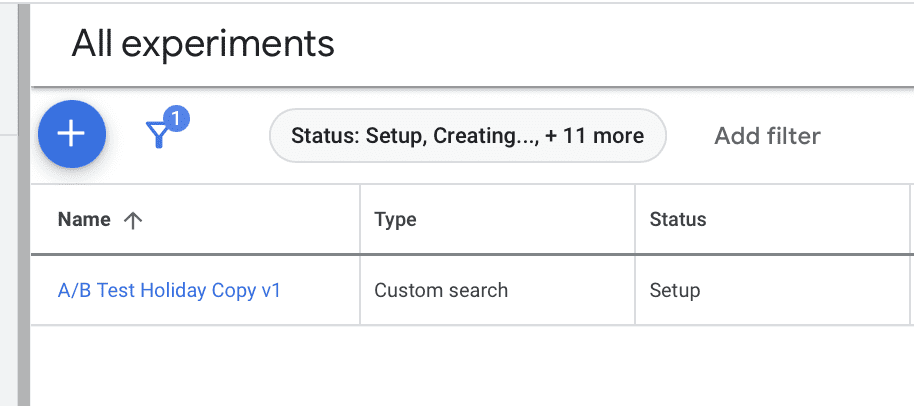

A/B Testing with Google Experiments

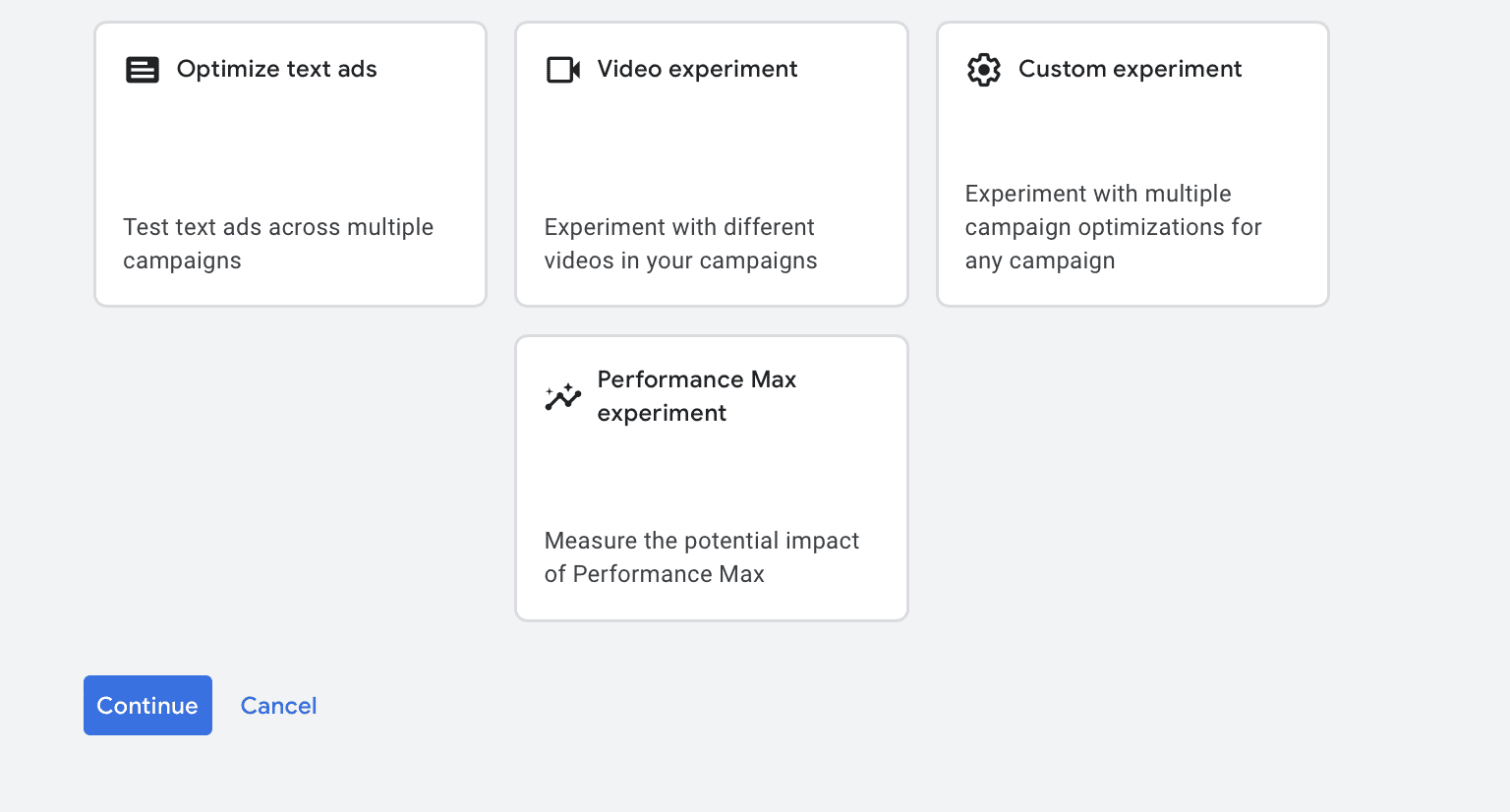

To get started, head to Google Experiments. This can be found under the Campaign tab. You can click the blue + under “All Experiments” to create a new A/B test.

Google will ask you what you want to test. You can optimize text ads, run a video experiment, run a Performance Max experiment, or set up your own. For this example, we’re going to optimize text ads.

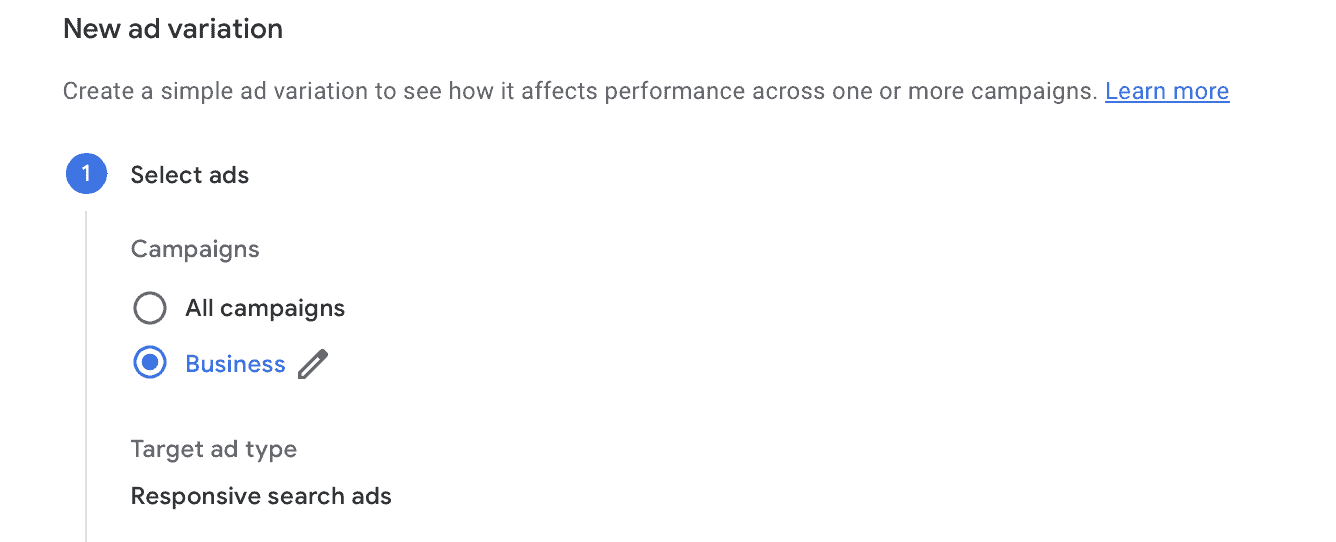

Next, Google will ask you to create an ad variation to see how it impacts performance. You can choose to run the variation across all of your campaigns, a specific campaign, or all campaigns that meet specific criteria. You can then choose what ad type you want to target (we chose Responsive Search ads).

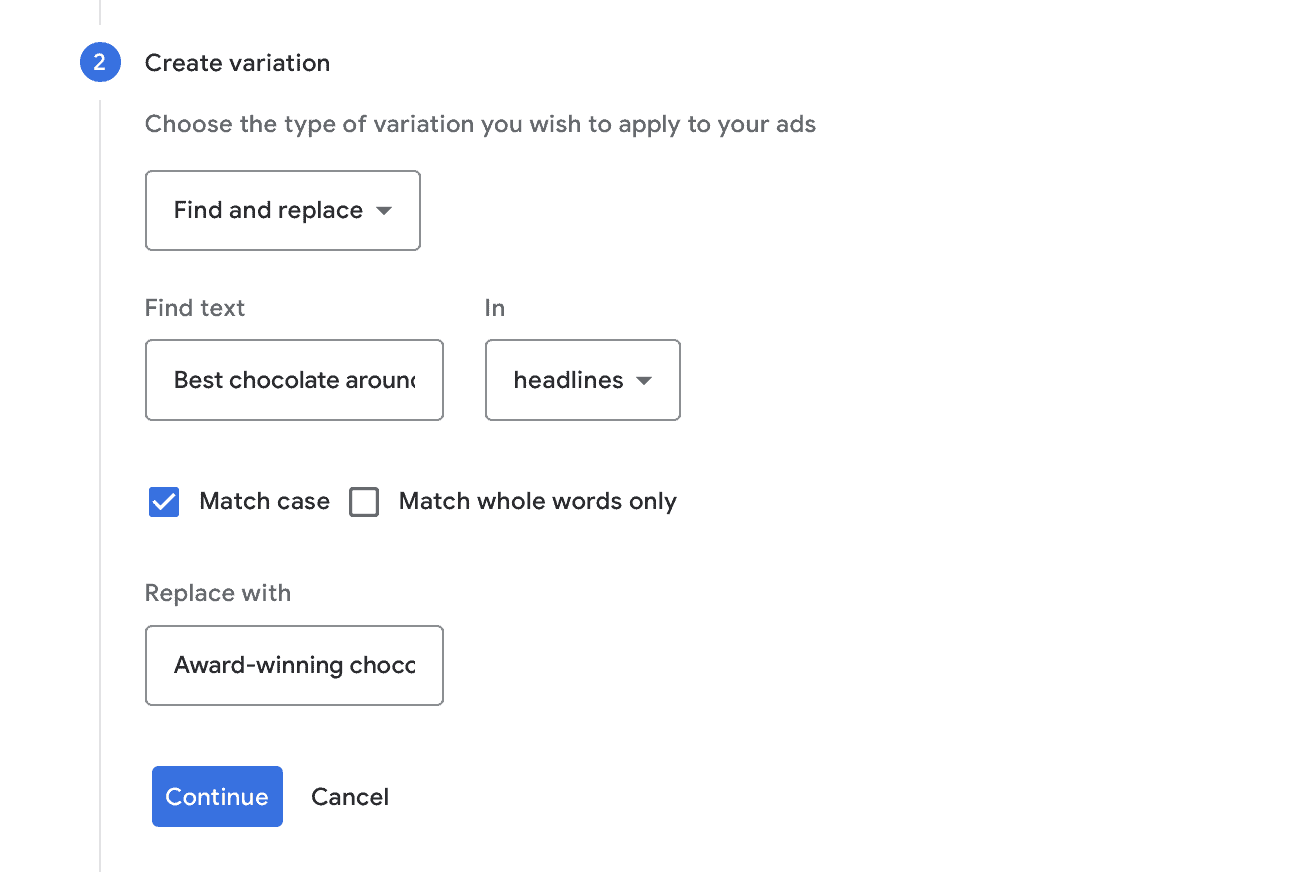

Next, you’ll choose how you want to go about testing the ad text. You can choose, for example, to find and replace specific text with new options, and you can do this in headlines or the ad copy. So here, we’re swapping “Best chocolate around” with the phrase “Award-winning chocolate” in our ad headlines. We’re choosing to have it Match case, but you can also have it match whole words.

Next, you’ll name the variation. Make sure the name is easy to identify and remember, especially if you’re running a large number of A/B tests for Google Ads. Then choose a start date and set the “experiment split,” which determines how often your test ad will be shown to users instead of the original.

The default is 50%, which splits traffic evenly between the two versions and gives you a balanced comparison. If you’re testing a high-performing, high-traffic campaign that you mostly want to leave alone, however, you can lower it significantly.

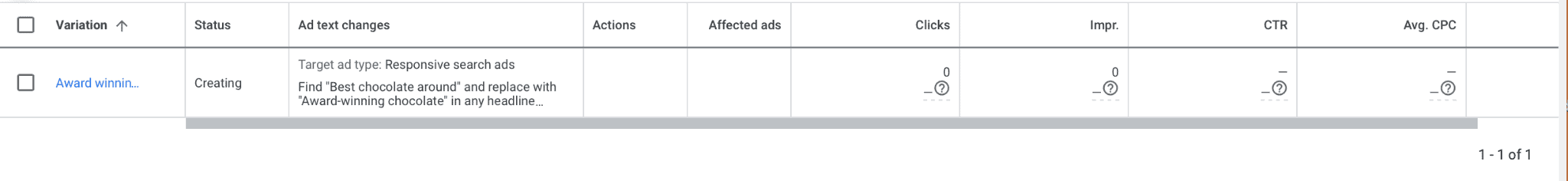

Once your test is submitted, you’ll see it under your Experiments tab. You’ll see which campaigns it applies to, and once the test starts, you’ll be able to see how the results measure up between the two ad variations.

A/B Testing Google Ads Manually

If you want to test a campaign type— or an aspect of a campaign— that Google doesn’t support with Experiments, you can take the manual approach to Google Ads A/B testing.

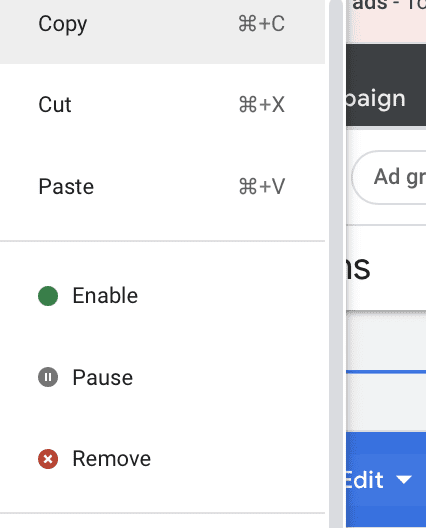

This is pretty straightforward. Go to your Campaigns page and check the box next to the campaign you want to test. Then click “Edit” in the blue highlighted bar at the top of the dashboard.

This will open a drop-down menu. Select “Copy.”

You’ll then re-open the drop-down Edit menu and select “Paste.” It may take a few minutes, but your campaign will duplicate.

Once it does, you’ll see it listed as “[Original campaign name] #2” in the dashboard. Click to edit it, and then change whichever variable you want to test.

One thing to note here: It will duplicate your entire campaign, including your budget. If you only have, for example, $12 a day to spend on this particular campaign, you’ll want to edit both budgets to ensure that you’re staying on track with ad spend.
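If you want total spend to stay at, say, $12 a day across both versions, you have to set each campaign's budget yourself. This small helper is a hypothetical illustration of that arithmetic, not a Google Ads feature; its 50/50 default simply mirrors the default experiment split in Google Experiments.

```python
def split_daily_budget(total_daily_budget: float, duplicate_share: float = 0.5) -> tuple[float, float]:
    """Hypothetical helper: divide one daily budget between the original and its duplicate.

    Returns (original_budget, duplicate_budget), rounded to cents.
    The duplicate's share is computed first and the original gets the remainder,
    so the two parts add back up to the total.
    """
    duplicate = round(total_daily_budget * duplicate_share, 2)
    original = round(total_daily_budget - duplicate, 2)
    return original, duplicate


print(split_daily_budget(12.00))       # (6.0, 6.0)
print(split_daily_budget(12.00, 0.2))  # (9.6, 2.4)
```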

Once the duplicate campaign is running, monitor it for 2-4 weeks to see how it performs compared to your original ad. When you’re ready for the next A/B test, create another duplicate and start the process again.

How to Evaluate Split Tests With Google Ads

When you’re looking at A/B tests from Google Ads, you need to know how to analyze the data in question.

To start, you never want to find yourself looking only at the number of clicks on an ad campaign. While click-through rate (CTR) is an important metric, it’s not the only one that matters. You may be getting more clicks, for example, but fewer conversions.

Ultimately, you want to find campaign changes that help you to increase your ROAS. Which ad changes are driving more high-quality traffic that’s converting? And at what cost?
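To see why clicks alone can mislead, here is a minimal sketch that computes CTR, conversion rate, cost per conversion, and ROAS (conversion value divided by ad spend) for each version of a test. The `ArmResults` class and every number in it are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ArmResults:
    """Hypothetical totals for one version of an A/B test over the same date range."""
    name: str
    impressions: int
    clicks: int
    conversions: int
    cost: float              # ad spend in dollars
    conversion_value: float  # revenue attributed to the ads, in dollars

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.clicks

    @property
    def cost_per_conversion(self) -> float:
        return self.cost / self.conversions

    @property
    def roas(self) -> float:
        return self.conversion_value / self.cost


# Made-up results: the variant wins on clicks but loses on revenue.
original = ArmResults("original", 20_000, 600, 30, 900.00, 3_600.00)
variant = ArmResults("variant", 20_000, 800, 24, 1_200.00, 2_880.00)

for arm in (original, variant):
    print(f"{arm.name:>8}: CTR {arm.ctr:.1%}, conv. rate {arm.conversion_rate:.1%}, "
          f"cost/conv ${arm.cost_per_conversion:.2f}, ROAS {arm.roas:.2f}")
```

With these made-up figures the variant has the higher CTR (4.0% vs. 3.0%) but the lower ROAS (2.40 vs. 4.00), so judged on ROAS the original version would stay in place.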

We recommend the following:

- Campaigns that drive the highest number of clicks from high-quality traffic should be prioritized

- As long as we’re staying within budget, it’s often worth it to pay slightly more for high-quality clicks than lower-quality clicks

- Maintaining a strong percentage of the impression share is good, but we don’t want to overspend and burn through ad spend too quickly

You need to look at the entire picture when assessing your Google Ads A/B test results. Without that, you may be optimizing for the wrong thing.

Google Ads Split Testing FAQ

Still have questions on Google Ads split testing? We’ve got answers!

How Much Should I Spend on Split Testing?

We recommend allocating a chunk of your budget to A/B tests; in many cases, at least 5-10% of the budget should go towards dedicated testing. In some cases it makes sense to allocate 50% of a specific campaign’s budget to its A/B test, but in other cases you may want to keep it closer to 20%.

A great deal of this depends on your specific campaign, your total budget, and how aggressively you want to optimize your campaigns.

Do I Need to A/B Test Successful Campaigns?

While you may create campaigns that are “successful” by baseline standards in that they drive results at an acceptable cost, A/B testing is key to long-term optimization and success. It will help you understand why those initial campaigns were successful and how to replicate the success— or heighten it— in the future.

How Long Should My A/B Tests Run?

A/B tests need time to run so that Google can get up to speed and serve your ads to the right audiences. We recommend leaving A/B tests on for at least 2-4 weeks unless you’re seeing truly disastrous results after the second week.
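How long is long enough depends mostly on traffic. A common way to estimate it, borrowed from general A/B testing practice rather than from anything Google Ads reports, is the normal-approximation sample-size formula for comparing two conversion rates. The sketch below assumes a 5% baseline conversion rate, a hoped-for 6%, and 1,000 clicks a day, all hypothetical. It also shows why lowering the experiment split for a high-traffic campaign stretches the test out. It needs Python 3.8+ for `statistics.NormalDist`.

```python
import math
from statistics import NormalDist


def clicks_needed_per_arm(baseline_rate: float, target_rate: float,
                          alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate clicks each version needs to detect a change in conversion rate.

    Standard normal-approximation sample size for a two-sided test of two proportions.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_power = NormalDist().inv_cdf(power)          # about 0.84 for 80% power
    variance = baseline_rate * (1 - baseline_rate) + target_rate * (1 - target_rate)
    return math.ceil((z_alpha + z_power) ** 2 * variance / (baseline_rate - target_rate) ** 2)


def days_needed(clicks_per_arm: int, daily_clicks: int, experiment_split: float = 0.5) -> int:
    """Days until the smaller side of the split has collected enough clicks."""
    smaller_share = min(experiment_split, 1 - experiment_split)
    return math.ceil(clicks_per_arm / (daily_clicks * smaller_share))


needed = clicks_needed_per_arm(baseline_rate=0.05, target_rate=0.06)
print(needed)                                                         # about 8,155 clicks per version
print(days_needed(needed, daily_clicks=1_000))                        # 50% split
print(days_needed(needed, daily_clicks=1_000, experiment_split=0.2))  # 20% split
```

Under these assumptions a 50% split needs about 17 days and a 20% split about 41 days, which is one reason smaller experiment splits call for longer tests.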

How Often Should I Conduct A/B Tests?

You should be conducting A/B tests regularly, even on established campaigns. You may have new ideas to test, and it’s always important to remember that Google, your audience, and the market itself are always changing and evolving. Testing will help you stay at the top of your game.

What if I Don’t Trust the Results of a Split Test?

Believe it or not, this happens sometimes. We’ll run a test just for the sake of being thorough or during troubleshooting and get results back that are surprising. Our click-driving branded copy gets dethroned for something that seems more basic, or we see the number of clicks decrease based on a specific action.

Sometimes A/B tests surprise us, which is why it’s so important to run them. But sometimes, if you really don’t trust the results of a single A/B test, you can trust your gut. Run another test with a separate campaign and see if you can duplicate the results, or keep the test running for a little while longer. There could be a change in the marketplace or some sort of fluke that causes a short-term impact on results.
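Before rerunning a surprising test, it can help to check whether the gap between the two versions is larger than chance alone would plausibly produce. The sketch below uses a pooled two-proportion z-test, a standard statistical check that is not part of Google Ads itself; the conversion and click counts are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(conv_a: int, clicks_a: int, conv_b: int, clicks_b: int) -> float:
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    rate_a = conv_a / clicks_a
    rate_b = conv_b / clicks_b
    pooled = (conv_a + conv_b) / (clicks_a + clicks_b)  # overall rate if there is no real difference
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / clicks_a + 1 / clicks_b))
    z = (rate_a - rate_b) / std_err
    return 2 * (1 - NormalDist().cdf(abs(z)))


small = two_proportion_p_value(30, 600, 24, 800)        # 5.0% vs. 3.0%
large = two_proportion_p_value(300, 6_000, 240, 8_000)  # same rates, 10x the data
print(f"small test p = {small:.3f}, large test p = {large:.6f}")
```

With 30 conversions from 600 clicks against 24 from 800, the p-value lands around 0.05, so the difference could plausibly be noise and extending or repeating the test is reasonable. With ten times the data at the same rates, the p-value drops far below 0.001.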

Final Thoughts

In many cases, your Google Ads theories are only as good as the data that backs them up, and split testing can really help with that. If you want to understand why your campaigns are performing the way they are and have concrete data about what you can do to optimize them, A/B testing is a necessity.

If the thought of constantly testing and optimizing your campaigns feels overwhelming, you’re not alone. It’s one of the reasons so many clients come to us. We’re able to leverage the most cutting-edge strategies and optimization tactics combined with A/B testing to help you get results.

Ready to have your ads tested and optimized? Get in touch with us here.
